Any time you’re moving around large amounts of data, it’s going to take time to complete. That’s why the VM Instant Boot feature enables you to virtualize your backup and boot it, without needing to move huge quantities of data.

But there are going to be times when you need to do a full recovery – either locally or from the cloud. Here, we’ll discuss the factors that can bottleneck or influence the speed of backup and recovery.

TL;DR Summary

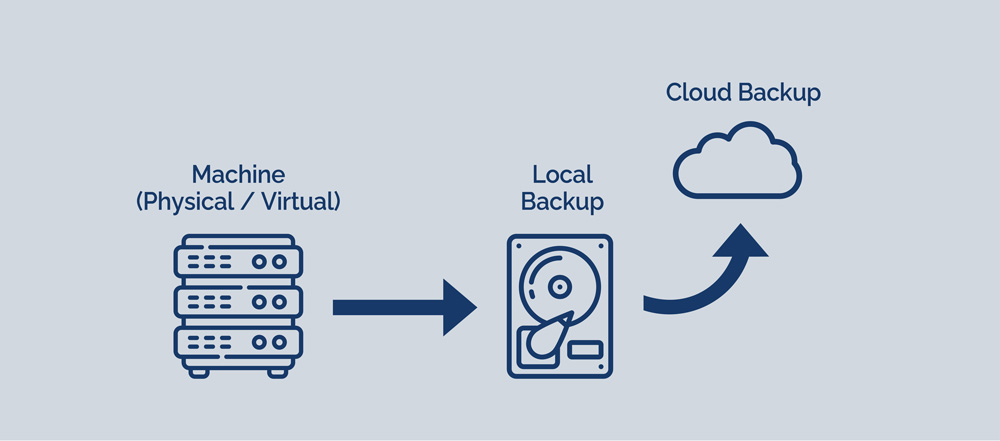

In BackupAssist ER’s Disk to Disk to Cloud (D2D2C) model, the bottlenecks are different for Stage 1 (Disk to Disk) and Stage 2 (Disk to Cloud).

The tables below summarise the various bottlenecks and their level of influence on overall backup and recovery performance.

Stage 1 (D2D) Factors that affect the speed of local full backup and recovery

| Factor | Level of influence |
|---|---|
| Speed of backup device | High |
| Speed of source drives | Medium |

Stage 2 (D2C) Factors that affect the speed of cloud full backup and recovery

| Factor | Level of influence |
|---|---|
| Speed of network connection to cloud storage | High |
| Having many small files in the backup | High |
| Speed of your cloud storage service | Medium |
| Number of CPU threads / cores on your client machine | Medium |
| Speed of drive you download the cloud backup to | Low |

Introduction

In BackupAssist ER’s D2D2C model, there are two different stages of the backup:

- Stage 1 – the drive image of your source disks to the backup device (disk to disk)

- Stage 2 – the cloud backup from your backup device to cloud storage (disk to cloud)

In this discussion, we’ll consider the first full backup, and a full recovery. On the recovery side, this means:

- Recovering from local backup – a full bare metal recovery, which copies all data from your backup device to your target drives

- Recovering from cloud backup – which downloads your entire backup from the cloud, back to a local device

Local backups (disk to disk) performance factors

The disk to disk portion of the backup in BackupAssist ER is generally very fast.

Speed of backup device – level of influence: high

For most people, the write speed of the backup device is going to be the limiting factor in the D2D drive image, because the backup device is usually slower than the main drives in the server. Here are some common bottlenecks.

Direct Attached Storage

Interface limitations:

- SATA – theoretical limit of 6 Gbps (750 MB/s raw, about 600 MB/s after encoding overhead)

- USB 3.0 – theoretical limit of 5 Gbps (625 MB/s raw, about 500 MB/s after encoding overhead)

- USB 3.1 – theoretical limit of 10 Gbps (1250 MB/s raw)

- USB 3.2 – theoretical limit of 20 Gbps (2500 MB/s raw)

Storage device limitations:

- HDD – typical manufacturer’s quoted speeds: 227 MB/s (WD Black), 180 MB/s (WD Red)

  - If a hard disk already contains data, the level of file fragmentation on the disk becomes extremely relevant.

- SATA SSD – typical manufacturer’s quoted speed: 550 MB/s sustained read & write

  - Note: in our testing, we found that many SSDs are unable to maintain the manufacturer’s sustained write speed for long. After several minutes, many SSDs would get hot and start thermal throttling, dropping write speeds to less than half.

Network Attached Storage / iSCSI

Beyond the bottlenecks listed for DAS, network storage adds:

- Gigabit ethernet – theoretical limit of 1 Gbps (125 MB/s)

- Speed of NAS processing unit – a combination of the CPU (often embedded processors) and operating system.

Speed of source drives – level of influence: medium

In a minority of situations, the backup device will be faster than the source device. In that case, the bottleneck will be the source drives.

In addition to the bottlenecks listed above, the source drives are influenced by:

- RAID controller

- Level of data fragmentation on the drives
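To make the idea of a limiting factor concrete, here is a minimal sketch that treats the slowest stage in the chain as the effective throughput and turns it into a rough time estimate. The function names and the 1 TB example are illustrative assumptions, not part of BackupAssist ER, and real transfers will be slower than the quoted figures once fragmentation and thermal throttling come into play.

```python
def effective_throughput_mb_s(*stage_limits_mb_s: float) -> float:
    """A transfer runs no faster than its slowest stage."""
    return min(stage_limits_mb_s)


def estimated_hours(data_gb: float, throughput_mb_s: float) -> float:
    """Hours needed to move data_gb (decimal GB) at a steady throughput."""
    seconds = data_gb * 1000 / throughput_mb_s
    return seconds / 3600


# Hypothetical setup: SATA SSD source (550 MB/s), USB 3.0 link (625 MB/s raw),
# and a WD Red backup drive (180 MB/s), using the quoted figures from this article.
limit = effective_throughput_mb_s(550, 625, 180)
hours = estimated_hours(1000, limit)  # 1 TB drive image (assumed size)

print(f"Bottleneck: {limit} MB/s, estimated time: {hours:.2f} hours")
```

In this example the backup drive is the bottleneck, so a faster interface or faster source drives would not shorten the backup.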

Cloud backups (disk to cloud)

Speed of network connection to cloud storage – level of influence: high

By far, the biggest influence on speed is the speed of your network connection to the cloud storage provider. For most on-premises situations, that’s limited by the speed of the service provided by your Internet Service Provider.

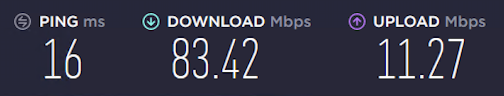

For example, if you have a 100/20 connection (100 Mbps download, 20 Mbps upload), it’s highly likely that you will get close to 20 Mbps of throughput for your backup, and 100 Mbps for your recovery. An experiment we ran in January 2021 shows the backup and recovery speeds from an on-premises machine to three different public clouds.

Speed of internet connection, as tested by speedtest.net:

Average speed of backup and recovery:

| Operation | Wasabi | Azure | AWS |
|---|---|---|---|
| Backup | 10.6 Mbps | 10.0 Mbps | 10.5 Mbps |
| Recovery | 82.2 Mbps | 79.7 Mbps | 80.2 Mbps |

Note: We’ll share our testing data in a future blog post.

The factors affecting how close your Internet connection gets to its saturation point are:

- The latency between your machine and the cloud service

- The sustained transfer rate between your machine and the cloud service

Latency is important because it affects how much “wait time” there is between actual data transfers. This means you can expect poorer performance if you are backing up across large distances – such as across countries.

And as shown in the table above, the sustained transfer rate sets the theoretical upper limit for overall average upload and download speed.
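As a rough illustration of what those rates mean in practice, the sketch below converts a sustained rate in Mbps into an estimated transfer time. The 100 GB backup size is an assumed example, and the function name is ours; the rates are the Wasabi averages from the table above.

```python
def transfer_hours(data_gb: float, rate_mbps: float) -> float:
    """Hours to transfer data_gb (decimal GB) at a sustained rate in megabits per second."""
    bits = data_gb * 8 * 1000**3
    seconds = bits / (rate_mbps * 1_000_000)
    return seconds / 3600


# Assumed backup size of 100 GB, at the average Wasabi speeds measured above.
backup_hours = transfer_hours(100, 10.6)
recovery_hours = transfer_hours(100, 82.2)

print(f"Backup:   {backup_hours:.1f} hours")
print(f"Recovery: {recovery_hours:.1f} hours")
```

The estimate ignores latency and per-request overhead, so treat it as a lower bound on the real duration.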

Having many small files in the backup – level of influence: high

Let’s take two scenarios – if you have 10 GB of data in one file, versus 10 GB of data in 100,000 files, which will back up and recover faster?

The answer, hands down, is the former – the single file scenario. Let’s see why…

It’s all to do with the “chunking” algorithm in BackupAssist’s cloud engine. It will take each file and split it into deduplicated chunks, with an average size of 2 MB. But if a file is under 2 MB in size, then the entire file is stored as one chunk.

In our two scenarios:

- Single file scenario – the file is split into (on average) 5,000 chunks of 2 MB each

- Many file scenario – the files are stored as 100,000 chunks of (on average) 100 KB each.

This means that in our first scenario, BackupAssist will issue 5,000 requests to the cloud storage, but in the second scenario, there will be 100,000 requests. That’s 20 times as many!

Each request to the server takes time, and the effect is very noticeable if there is a high latency between your machine and the cloud service.

We do try to mitigate this problem by having many concurrent connections open to the cloud service, but there is no avoiding the fact that many requests to the cloud server will slow things down.
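The arithmetic behind the two scenarios can be sketched as follows. This is a simplified model, not BackupAssist’s actual chunking code: it assumes every chunk is exactly the 2 MB average, and the 200 ms round trip and 8 concurrent connections are hypothetical values chosen only to show how latency multiplies with request count.

```python
import math

CHUNK_SIZE_MB = 2  # average chunk size quoted in this article


def request_count(file_sizes_mb):
    """Approximate number of chunks (and so requests) for a set of files.

    A file smaller than one chunk is stored as a single chunk; larger files
    are approximated as fixed-size chunks of the average size.
    """
    return sum(max(1, math.ceil(size / CHUNK_SIZE_MB)) for size in file_sizes_mb)


def latency_overhead_seconds(requests, round_trip_ms, connections):
    """Time spent purely waiting on round trips, spread across concurrent connections."""
    return requests * (round_trip_ms / 1000) / connections


single_file = request_count([10_000])      # one 10 GB file
many_files = request_count([0.1] * 100_000)  # 100,000 files of 100 KB each

# Hypothetical link: 200 ms round trip, 8 concurrent connections.
for label, requests in (("single file", single_file), ("many files", many_files)):
    wait = latency_overhead_seconds(requests, round_trip_ms=200, connections=8)
    print(f"{label}: {requests} requests, about {wait:.0f} s of latency overhead")
```

Under these assumptions the many-file backup spends 20 times longer waiting on round trips, even though both backups move the same 10 GB.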

Speed of your cloud storage service – level of influence: medium

In our experimental testing, we tested the speed of various cloud services for backup and recovery, and discovered that the speed of the cloud service does make a difference.

In one experiment that shows the differences the best, we see about a 10% difference between the fastest and slowest cloud on recovery, but a whopping 76% difference on the backup.

| Operation | Wasabi | Azure | AWS |
|---|---|---|---|
| Upload the full system backup (duration) | 3h 14m 47s | 5h 42m 24s | 3h 24m 6s |
| Upload the full system backup (average speed) | 96.6 Mbps | 54.9 Mbps | 92.1 Mbps |
| Download the cloud backup (duration) | 1h 57m 50s | 2h 12m 14s | 1h 59m 41s |
| Download the cloud backup (average speed) | 224 Mbps | 200 Mbps | 221 Mbps |
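The percentage differences quoted above can be reproduced from the average speeds in the table. The sketch below uses the slowest cloud as the baseline, which is an assumption about how the figures were derived; on that basis the upload gap comes out at about 76% and the download gap at about 12%.

```python
def percent_faster(fastest_mbps: float, slowest_mbps: float) -> float:
    """How much faster the fastest service is, relative to the slowest."""
    return (fastest_mbps / slowest_mbps - 1) * 100


# Average speeds in Mbps, taken from the table above.
upload = {"Wasabi": 96.6, "Azure": 54.9, "AWS": 92.1}
download = {"Wasabi": 224, "Azure": 200, "AWS": 221}

upload_gap = percent_faster(max(upload.values()), min(upload.values()))
download_gap = percent_faster(max(download.values()), min(download.values()))

print(f"Upload gap:   {upload_gap:.0f}%")
print(f"Download gap: {download_gap:.0f}%")
```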

We’ll be publishing the full results of our experimental testing in an upcoming blog post.

Number of CPU threads / cores – level of influence: medium

Assuming that your network connection and the speed of your cloud service are not significant bottlenecks, then the number of CPU threads / cores does affect your backup and recovery speed.

In our detailed experiments with different CPU threads / cores we saw a roughly 30% improvement when doubling the number of CPU threads from 8 to 16. But going beyond 16 threads will not yield a significant performance benefit.

The reasons for this are discussed in that blog article.

Speed of the drive to which you download the cloud backup – level of influence: low

The final factor we’ll discuss today concerns the recovery – and that’s the disk to which you’re recovering. When you download a complete backup from the cloud, your target disk can make a difference.

Let’s assume for now that you have a fast network, fast cloud service, and a 16 thread CPU.

If you recover to a hard drive instead of an SSD, you’ll experience a slowdown, especially if you have huge files in your backup. (And we regard anything over 20 GB to be a huge file… and the bigger the file, the more potential slowdown you might experience.)

The reason for this is that BackupAssist has to download chunks and then reassemble them to recreate the original files. The catch is that we cannot predict in advance the order in which these chunks will arrive… in fact, most of the time they arrive out of order. Thus, we often have to write chunks to temp files on disk, and then rewrite them “in place” in the final destination when contiguous chunks become available.

This can cause a considerable amount of Disk I/O as data gets written to, read from, and rewritten to the same disk.

On SSDs, this effect is barely noticeable, but on spinning hard drives, it can result in a slowdown.
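The general idea of reassembling out-of-order chunks can be sketched like this. It is a simplified illustration and not BackupAssist’s implementation: an in-memory dictionary stands in for the temp files, and the function and variable names are ours. Every chunk that has to wait in the holding area represents data that, on a real system, would be written to disk, read back, and written again.

```python
import io


def reassemble(chunks, output):
    """Write chunks to output in index order, however they arrive.

    chunks: iterable of (index, data) pairs in arrival order.
    Returns the peak number of chunks held back while waiting for a gap to fill.
    """
    pending = {}      # stands in for temp files on disk
    next_index = 0    # next chunk the output is waiting for
    peak_pending = 0

    for index, data in chunks:
        pending[index] = data
        peak_pending = max(peak_pending, len(pending))
        # Flush every contiguous chunk that is now available.
        while next_index in pending:
            output.write(pending.pop(next_index))
            next_index += 1

    return peak_pending


# Chunks arriving out of order: 2, 0, 3, 1.
arrivals = [(2, b"C"), (0, b"A"), (3, b"D"), (1, b"B")]
out = io.BytesIO()
peak = reassemble(arrivals, out)

print(out.getvalue(), "peak chunks held back:", peak)
```

The larger the file, the more chunks can pile up waiting for a missing piece, which is why huge files show the effect most clearly on spinning disks.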

We’ll publish results on this effect shortly. For most situations, the speed of the target disk is not a significant factor, but it can show up if there are no other bottlenecks in the system and your backups contain huge files.

Conclusions

BackupAssist ER can provide extremely high performance backup and recovery in environments without significant bottlenecks.

However, every system will have limitations – many stemming from underlying budgetary or practical considerations.

Understanding the bottlenecks shows you what to expect from BackupAssist ER in your environment, and gives you a guide on how to get the best recovery performance with the resources at your disposal.