TL;DR summary

- Remote files – that is, files stored not locally, but on machines accessible via the internet – can include websites, databases, and logs.

- Any machine that’s remotely accessible is a target for hacking. For example, websites are commonly hacked, and data can be deleted or held to ransom.

- User errors are another source of data loss.

- Backups are necessary to ensure business continuity. There are two common methods – “push” and “pull”, which describe whether backup data is pushed (uploaded) to offsite storage or pulled (downloaded) to a separate system.

- There are pros and cons of each method. However, from a cyber security viewpoint, the “pull” method has several important advantages.

- BackupAssist 365 can help you achieve reliable and secure backups for remote files via the SFTP interface.

The rise of hacking cloud servers

There seems to be another high-profile hack reported in the news every week – to the point where we can become immune to the problem as it happens to “someone else”.

For example, WordPress is the world’s most popular web CMS, and there are a whole series of blog articles dedicated to explaining various ways to hack it. The WPHackedHelp blog details 13 common ways WordPress is hacked, from XSS, SQL, backdoor injection, and spoofing, just to name a few.

There is a constant debate as to whose responsibility it is to secure cloud hosted systems. Is it your responsibility, or the cloud provider’s?

We think the most helpful way is to think of it as a joint responsibility. When you drive on a road, it is your responsibility to drive on the correct side, just as it is the responsibility of oncoming traffic to do the same. But if the oncoming traffic makes a mistake, you are the one that must take evasive action – and that’s where your anti-lock brakes, stability control, seat belts, and other safety features may just save your life.

So yes, your cloud provider should do backups, but it’s also your responsibility to protect your assets.

In our own experience, many years ago we suffered a root level compromise of one of our web servers, due to a vulnerability in the Linux kernel. Luckily, no harm was caused because it was detected early enough, but such events could happen to any business.

User errors – another source of data loss

We are a little embarrassed to admit this (as a backup company), but we have suffered a permanent loss of data worth roughly $50,000.

How did this happen?

Over a decade ago, we had an online marketing lead database that was stored in MySQL. This custom database stored leads from print advertisements and trade shows – which we had paid over $50,000 to acquire. All this was stored on our web server, in a virtual private server hosted by a top-tier host.

A developer, while making some database modifications, thought he was connected to a development environment and ran a “drop table”. However, he typed the command into the wrong Terminal window, which was connected to the production server instead. Oops.

Our web host tried to recover the data from their backups, but was unable to do so, due to some infrastructure rework they were doing at the time. It was a particularly bad and unlikely coincidence.

Mistakes like these unfortunately do happen – even to the best of us.

Backups are the answer – and you can either push or pull.

We all know that with good backups you can get your systems running again. Backups are a 2nd, 3rd, 4th, copy of your data that you can rely upon, in case your primary copy gets destroyed.

There are two common architectures for this – push and pull – which refers to how you get data offsite onto a different network or storage system.

- Push: the system pushes (uploads) the backup to a separate storage for safekeeping.

- Pull: an external system logs in and pulls (downloads) the remote files.

Both are commonly used, and assuming no nefarious interference, both architectures work. So how do you choose?

Push vs. pull – which is better from a cyber security perspective?

To answer this question, we must consider what security breaches have to occur in order for both the primary system and all backups to be destroyed or deleted.

We have to assume that the primary system has been compromised, and the hacker has root level access. Under this system, the push architecture has some flaws which, if unaddressed, could result in the backups being rendered useless.

| Method | Pros | Cons |

|---|---|---|

| Push | Backup data is stored on a separate system, usually offsite. |

Backups can be disabled by a hacker with a single point of compromise.

Backups may be vulnerable to deletion by a hacker with a single point of compromise. |

| Pull |

Backup data is stored on a separate system, usually offsite.

Backups are scheduled on a separate system. Deleting the backups requires multiple points of compromise. It’s easy to pull the backups to multiple locations for extra resilience. |

In a push architecture, backups are commonly pushed offsite via scripts, scheduled via cron. In order to log into remote storage – such as NFS, SFTP, S3, Dropbox, or Google Drive, some form of credentials are needed – and these credentials need to be stored somewhere. This leads to two major problems:

- Cron jobs often go unmonitored and can be disabled without anyone noticing.

- If scripts are used to upload files to remote storage, then a hacker can often use the same tools to delete files on remote storage.

The only way a push architecture can be cyber secure is if both problems are mitigated – such as by using immutable storage.

In a pull architecture, backups are created by downloading files from the remote system. Therefore, scheduling this operation and storing the backups are done on a different system – meaning that a hacker will need to successfully breach multiple systems in order to harm the backups.

A pull architecture can be implemented using scheduled scripts, or by using a backup software package like BackupAssist 365.

How to set up BackupAssist 365 to pull files from remote servers via SFTP

It is very simple to set up a backup system to pull files from a remote server using the Secure FTP protocol. This is a file transfer protocol built on top of SSH – and the good thing is that SSH is ubiquitous and available on most cloud hosting platforms.

This functionality is currently in beta, and we anticipate a full release by August 2021.

To set this up, follow these steps:

- Download and install BackupAssist 365.

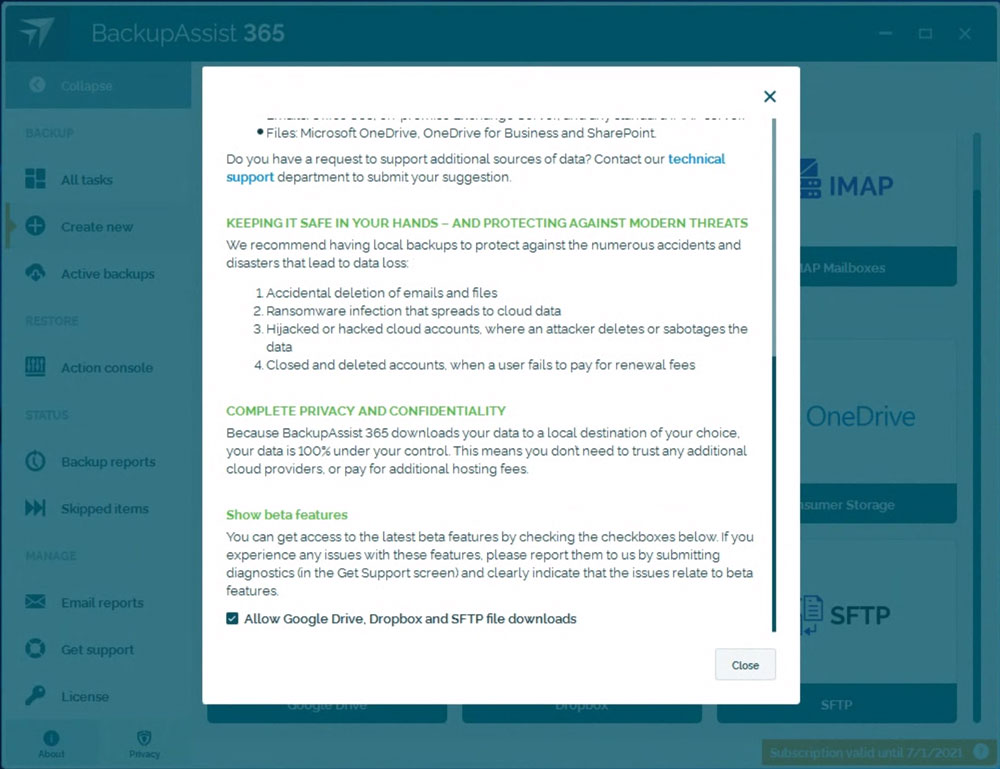

- After inputting your 30-day trial key, click on the “About” tab, scroll to the bottom, and click on the checkbox that reads “Allow Google Drive, Dropbox, and SFTP file downloads”. This will enable SFTP compatibility.

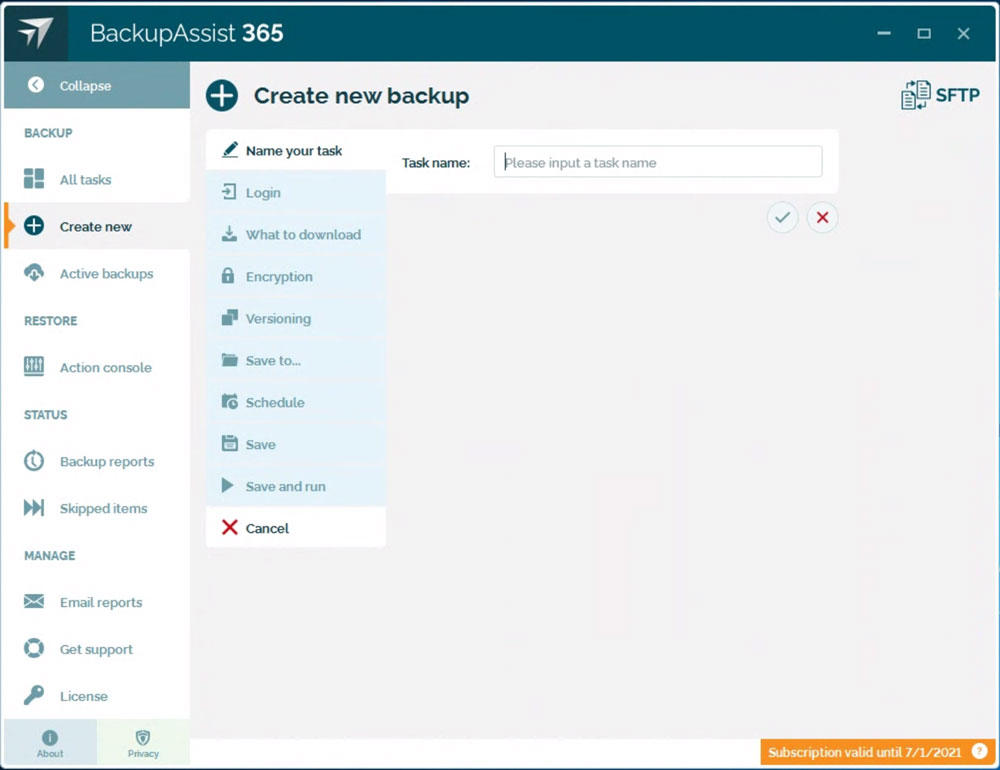

- Set up your SFTP backup task by clicking on “Create new”, and then “SFTP”. You can then specify the hostname, and a username/password combination to log into your remote system.

- The remainder of the setup should be self-explanatory. Versioning is recommended if you want to keep backup history, while encryption is recommended if you’re backing up health data or Personally Identifiable Information, and are subject to privacy laws like HIIPA and GDPR.

Here are some best practice hints:

- You should create a new user login into your remote system – this enables you to segregate access between regular operations and your backups.

- By default, you can back up items in the user’s home directory. If you wish to back up files and directories that reside elsewhere, you can create a symbolic link to those files and folders.

- It’s a good idea to grant read-only access to the new user login, so that there is minimal security risk introduced with this new user. When done properly, the risk should be near-zero.

- You may additionally choose to add firewall rules to only allow SSH access from your backup machine.

Although much of this article has mentioned backing up websites, the same principles can apply to backing up transaction logs, database dumps, and router logs.

Conclusion

We believe that a pull method for backing up remote files is an excellent way to achieve cyber resilience.

In the coming months, we’ll build upon this article to present examples of how to use BackupAssist 365 in various practical scenarios.